AI answers now influence buyers before they ever click your site.

AI content visibility tracking shows how LLMs mention your brand, which URLs they cite, and what flows through to traffic and revenue. If you only watch rankings, you miss the invisible demand being shaped in ChatGPT, Gemini, Perplexity, and Copilot.

Direct-from-engine data beats simulations. [Momentic](https://momenticmarketing.com/blog/tracking-ai-visibility-performance) notes that Bing Webmaster Tools' AI Performance Report is currently the strongest dataset for AI performance.

Tie findings to pages and clusters, not just your root domain. If Perplexity cites your integration guide but skips pricing or onboarding docs, that's a pipeline gap. Close it with targeted updates and CTAs on those cited pages.

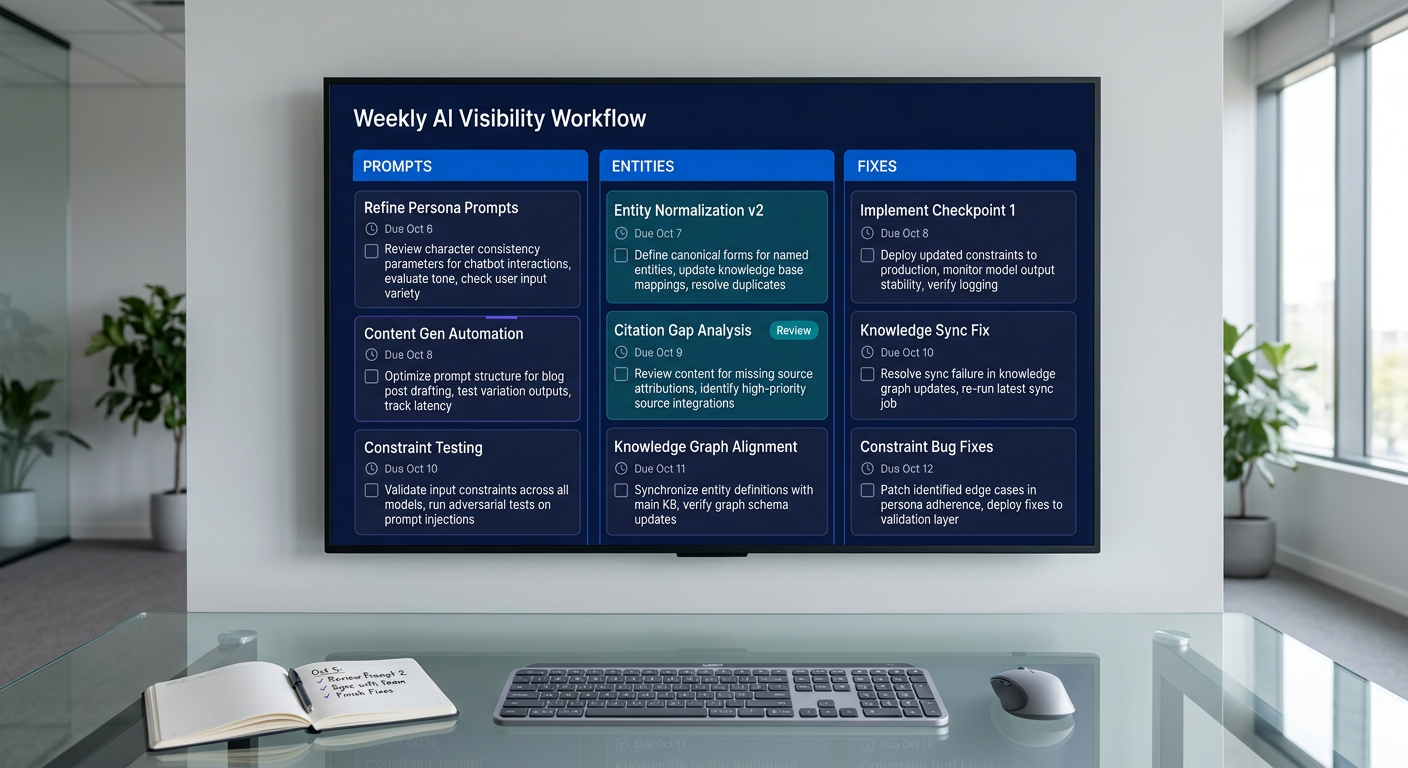

Use a consistent prompt panel. Track 50-150 prompts per cluster across tasks buyers actually run: "how to evaluate X," "X vs Y for [use case]," "best way to integrate X with Y." Validate prompts with 10-20 real sales or support chats each month to avoid optimizing for speculative queries. When you see misquotes or hallucinated claims, fix your entities and sources first.
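One way to keep the panel consistent is to generate it from a fixed set of templates and give every prompt a stable ID, so weekly runs compare like with like. The sketch below is a minimal illustration, not a prescribed tool: `Prompt`, `build_panel`, the `{x}`/`{y}`/`{use_case}` placeholders, and the sample names are all hypothetical.

```python
from dataclasses import dataclass
from hashlib import sha1
from itertools import product


@dataclass(frozen=True)
class Prompt:
    prompt_id: str  # stable hash of cluster + text, so weekly logs line up
    cluster: str
    text: str


def build_panel(cluster, templates, xs, ys, use_cases):
    """Expand templates into a de-duplicated, ordered prompt panel."""
    seen = {}
    for template, x, y, use_case in product(templates, xs, ys, use_cases):
        # str.format ignores keyword arguments a template does not use.
        text = template.format(x=x, y=y, use_case=use_case)
        if text not in seen:
            digest = sha1(f"{cluster}|{text}".encode("utf-8")).hexdigest()
            seen[text] = Prompt(digest[:10], cluster, text)
    return list(seen.values())


# Hypothetical product and competitor names, for illustration only.
panel = build_panel(
    cluster="integrations",
    templates=["how to evaluate {x}", "{x} vs {y} for {use_case}"],
    xs=["Acme"],
    ys=["Beta", "Gamma"],
    use_cases=["startups"],
)
for prompt in panel:
    print(prompt.prompt_id, prompt.text)
```

Because the ID is derived from the prompt text, rewording a prompt creates a new ID. That is deliberate here: a reworded prompt is a different measurement and should not be diffed against the old one.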

Cite external corroboration on-page. AI engines reward clear facts with supportive references. Add outbound links to standards bodies, docs, or credible media. As Search Engine Journal warns, bad trackers and sloppy methods will quietly break your analytics; keep tracking clean and export results outside GA4 for reconciliation.

For deeper context, see Why Startups Need an Autonomous SEO Workforce to Compete in .

Bad trackers contaminate GA4 and mislead budget decisions.

Separate discovery from attribution. Use engine-native feeds like Bing Webmaster Tools' AI Performance report, API samplers, and manual prompt panels to capture mentions and citations. Do not inject fake referrers, headless pageviews, or phantom UTMs into GA4. That noise spills into attribution models and distorts ROI.
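To make the separation concrete, here is a rough sketch of a filter that splits probable tracker hits from real visits before anything is forwarded to analytics. The token list, the `user_agent` field name, and both function names are assumptions for illustration. User-agent matching is only a first pass, since automated clients can spoof it.

```python
# Illustrative user-agent fragments commonly sent by automated clients.
# This list is an assumption, not an exhaustive or authoritative registry.
HEADLESS_UA_TOKENS = ("headlesschrome", "phantomjs", "python-requests", "curl/")


def is_probable_tracker(user_agent):
    """Return True when the user agent is missing or looks automated."""
    ua = (user_agent or "").lower()
    return ua == "" or any(token in ua for token in HEADLESS_UA_TOKENS)


def split_hits(hits):
    """Partition hit dicts into (keep, drop) by their 'user_agent' field."""
    keep, drop = [], []
    for hit in hits:
        target = drop if is_probable_tracker(hit.get("user_agent")) else keep
        target.append(hit)
    return keep, drop
```

Hits in `drop` can still be logged to a separate store for discovery work. The point is that they never reach GA4.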

Label AI-driven visits with server-side UTM governance. Create a strict source/medium taxonomy (e.g., source=AI, medium=referral, campaign=perplexity or gemini) applied at the edge. In GA4 Explore, analyze assisted paths from those UTMs to key events: doc views, signup page, pricing click, and trial start. Keep this separate from classic SEO to see incremental impact.
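A minimal sketch of that taxonomy, assuming the edge layer can see the landing URL and the referrer. The host-to-campaign map and the function names are hypothetical; verify the referrer hosts your own logs actually show before relying on them.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse

# Assumed referrer hosts mapped to campaign labels. Adjust to what your
# server logs actually contain.
AI_REFERRER_CAMPAIGNS = {
    "perplexity.ai": "perplexity",
    "www.perplexity.ai": "perplexity",
    "gemini.google.com": "gemini",
    "chatgpt.com": "chatgpt",
    "copilot.microsoft.com": "copilot",
}


def campaign_for(referrer):
    """Return the campaign label for an AI referrer, or None."""
    host = (urlparse(referrer or "").hostname or "").lower()
    return AI_REFERRER_CAMPAIGNS.get(host)


def apply_ai_utms(landing_url, referrer):
    """Append source=AI / medium=referral / campaign=<engine> for AI referrers."""
    campaign = campaign_for(referrer)
    if campaign is None:
        return landing_url
    parts = urlparse(landing_url)
    query = parse_qsl(parts.query, keep_blank_values=True)
    # Explicit campaign tags win: never overwrite UTMs already on the URL.
    if any(key.startswith("utm_") for key, _ in query):
        return landing_url
    query += [
        ("utm_source", "AI"),
        ("utm_medium", "referral"),
        ("utm_campaign", campaign),
    ]
    return urlunparse(parts._replace(query=urlencode(query)))
```

Leaving already-tagged URLs untouched is a design choice in this sketch: it keeps paid and email campaigns from being relabeled as AI traffic.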

Use Google Search Console, Ahrefs, and Semrush for search queries and backlinks, then layer LLM signals on top. When tools simulate prompts, sandbox the output and validate 10% of cases weekly with manual checks. Search Engine Journal has covered how AI visibility trackers can silently poison analytics; the fix is governance.
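The 10% weekly check is easier to defend if the sample is drawn reproducibly. A small sketch, assuming each simulated case has a string ID; `validation_sample` and the seed convention are hypothetical.

```python
import random


def validation_sample(case_ids, fraction=0.10, seed=None):
    """Pick a reproducible random subset of cases for manual review."""
    ids = sorted(set(case_ids))
    if not ids:
        return []
    size = max(1, round(len(ids) * fraction))
    # A dedicated Random instance keeps the draw independent of global state.
    return sorted(random.Random(seed).sample(ids, size))
```

Passing something like the ISO week number as `seed` lets anyone on the team regenerate the same review list later.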

You win AI visibility by stabilizing inputs.

The 3S Model aligns the work: control Sources (entities, facts, citations), optimize Surfaces (pages, schemas, datasets), and measure Signals (mentions, links, assisted traffic). Start with an entity audit in a spreadsheet: company, product, features, integrations, pricing mechanics, and proof points, each with 2-3 external corroborations. Upgrade Surfaces with FAQ blocks, HowTo steps, pricing math, and outbound references that LLMs can quote. Track Signals weekly for share of AI mentions, citation frequency by URL, and assisted session flow. Expect 2-6 weeks for Surfaces to propagate into Signals; failure modes include noisy prompt sets, mixing simulated and real traffic, and optimizing for generic prompts that never convert.
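If the entity audit lives in a spreadsheet export, a few lines can flag rows that fall short of the corroboration target. This is a sketch under assumptions: the dict shape, the function name, and the placeholder URLs are illustrative, and the default threshold uses the low end of the 2-3 range above.

```python
def under_corroborated(entities, min_sources=2):
    """Return entity names with fewer than min_sources distinct references."""
    flagged = []
    for name, sources in entities.items():
        # Normalize so the same link pasted twice counts once.
        distinct = {s.strip().lower() for s in sources if s and s.strip()}
        if len(distinct) < min_sources:
            flagged.append(name)
    return flagged


# Placeholder rows, not real references.
audit = {
    "pricing mechanics": ["https://example.org/review", "https://example.net/report"],
    "Slack integration": ["https://example.org/review"],
    "proof points": [],
}
print(under_corroborated(audit))
```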

For deeper context, see The Complete Guide to Mergeflo.com: Autonomous SEO.

Track progression: mention → citation → session → assisted conversion.

Use a tight KPI set you can defend in a board meeting. Start with share of AI mentions on target prompts, then monitor citation frequency by URL, and finally instrument assisted discovery through to trial or demo. Annotate every major content change so you can attribute gains to specific updates.

Keep the table small and the actions crisp. If a metric goes up, either scale the winning pattern or capture more demand. If it goes down, repair the chain: fix entities, strengthen Surfaces, and improve internal linking on cited pages.

| Signal | Where To Measure | Reliability Now | Action If Up | Action If Down |
|---|---|---|---|---|
| Share of AI Mentions | Prompt panel logs | Medium | Expand related prompts/entities | Improve entity coverage + references |
| Citation Frequency (URL) | Engine reports, samplers | Medium-High | Double down on that page + schema | Strengthen structure + outbound sources |
| AI-Referred Sessions | GA4 with UTM standards | Medium | Trace paths, add CTAs | Fix referral tagging, add links |
| Assisted Conversions | GA4 path + attribution | Medium | Allocate content budget to winners | Reposition content to higher intent |
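The first two signals can be computed directly from prompt panel logs. The sketch below assumes one record per prompt-and-engine response, with `brand_mentioned` and `cited_urls` fields, and defines share of mentions as mentioned responses divided by all responses. Both the record shape and that definition are assumptions, so adjust them to how your panel actually logs.

```python
from collections import Counter


def share_of_mentions(records):
    """Fraction of logged responses that mention the brand."""
    if not records:
        return 0.0
    mentioned = sum(1 for r in records if r["brand_mentioned"])
    return mentioned / len(records)


def citation_frequency(records):
    """Count responses citing each URL (once per response at most)."""
    counts = Counter()
    for r in records:
        counts.update(set(r["cited_urls"]))
    return counts
```

Counting a URL once per response keeps one answer that repeats the same link from inflating the number.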

If you need cadence at scale, Mergeflo's approach to autonomous execution keeps prompts, entities, and Surfaces in sync across releases. See The Complete Guide to Mergeflo.com: Autonomous SEO: https://mergeflo.com/blog/the-complete-guide-to-mergeflo-com-autonomous-seo

A 90-minute loop beats a bloated dashboard.

• Monday: Pull AI mention and citation diffs for 50-100 tracked prompts. Validate 10% manually. Tag wins and gaps by cluster.

• Wednesday: Ship 2-3 entity or schema fixes and one corroborating external citation outreach. Update FAQs where AI misstates facts.

• Friday: Review GA4 assisted paths and annotate changes. Queue tests: title rewrites, FAQ blocks, or data snippets.

A 3-person growth team running this loop can ship faster than any committee. Keep the scope small and the bar for proof high. If a fix doesn't move Signals within two cycles, revert or try the next tactic.

If you're building autonomous execution, align this loop with an autonomous SEO workforce so tracking and fixes happen without tickets: https://mergeflo.com/blog/autonomous-seo-workforce-startups-2025

Quantify the lift so budget decisions survive scrutiny.

A 3-person growth team tracks 120 prompts across 5 clusters. In 6 weeks, share of AI mentions rises from 12% to 29% across the tracked set, and weekly citations to money pages grow from 6 to 18. GA4 shows AI-referred sessions moving from 160 to 410/week, with 7.5% reaching a signup page and 2.2% converting to trial.

Compute influenced MRR: 410 sessions x 2.2% trial rate = 9.02 trials/week. At 30% trial-to-paid, that's 2.71 new paid accounts/week. With $140 ARPA, each week adds 2.71 x $140 = $379.40 of new influenced MRR, or about $1,518 of new MRR per four-week month. If content ops cost $2,400/month, payback occurs in month 2: assuming flat volumes and no churn, cumulative influenced MRR reaches about $3,035 by then, which exceeds the monthly cost, and it keeps growing as visibility compounds and assisted paths expand. Cross-check engine-side trends with Momentic's guidance to ensure your prompt tracking reflects real engine behavior.
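The same arithmetic as a small sketch you can rerun with your own inputs. The function names are hypothetical, and the payback check assumes a four-week month, flat weekly volumes, and no churn. The text rounds to 2.71 paid accounts before multiplying, so the unrounded figures here land slightly lower.

```python
import math


def influenced_mrr(sessions_per_week, session_to_trial, trial_to_paid,
                   arpa, weeks_per_month=4):
    """New influenced MRR added per week and per month."""
    trials = sessions_per_week * session_to_trial
    new_paid = trials * trial_to_paid
    new_mrr_week = new_paid * arpa
    return {
        "trials_per_week": trials,
        "new_paid_per_week": new_paid,
        "new_mrr_per_week": new_mrr_week,
        "new_mrr_per_month": new_mrr_week * weeks_per_month,
    }


def payback_month(new_mrr_per_month, monthly_cost):
    """First month in which accumulated MRR covers the monthly cost."""
    if new_mrr_per_month <= 0:
        raise ValueError("new_mrr_per_month must be positive")
    # MRR stacks month over month under the no-churn assumption.
    return math.ceil(monthly_cost / new_mrr_per_month)


result = influenced_mrr(410, 0.022, 0.30, 140)
print(round(result["new_mrr_per_week"], 2))   # 378.84
print(round(result["new_mrr_per_month"], 2))  # 1515.36
print(payback_month(result["new_mrr_per_month"], 2400))  # 2
```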

Assume decay; design for re-optimization on clear triggers.

Trigger a refresh when any of these occur:

• 4-week drop of 25%+ in citation frequency on a money page

• 20%+ decline in share of AI mentions on a tracked cluster

• AI-referred sessions plateau for 3 weeks after shipping fixes

• Product changes to facts, pricing, or integrations
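These triggers can be checked mechanically each week. The sketch below is one possible reading, not a fixed rule set: it treats both percentage thresholds as relative declines, and it defines a plateau as three consecutive weeks staying within a 5% band. That tolerance, the argument names, and the reason labels are all assumptions.

```python
def pct_drop(before, after):
    """Relative decline from before to after (0.25 means a 25% drop)."""
    if before <= 0:
        return 0.0
    return (before - after) / before


def refresh_triggers(citations_4w_ago, citations_now,
                     share_before, share_now,
                     sessions_since_fix, facts_changed,
                     plateau_tolerance=0.05):
    """Return the list of reasons a page or cluster needs a refresh."""
    reasons = []
    if pct_drop(citations_4w_ago, citations_now) >= 0.25:
        reasons.append("citation_drop")
    if pct_drop(share_before, share_now) >= 0.20:
        reasons.append("mention_share_decline")
    last3 = sessions_since_fix[-3:]
    # Plateau: the best of the last 3 weeks is within tolerance of the worst.
    if len(last3) == 3 and max(last3) <= min(last3) * (1 + plateau_tolerance):
        reasons.append("sessions_plateau")
    if facts_changed:
        reasons.append("product_facts_changed")
    return reasons
```

An empty list means no refresh is due. Anything else can go straight into the next cycle's queue with the reason attached.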

Update process: add missing facts and sources, expand FAQs reflecting prompt variants LLMs answer often, and tighten schema (FAQPage, HowTo, Product). Cut thin sections and merge duplicative pages that cannibalize entity signals. Add or repair outbound citations to authoritative third parties to stabilize Sources.
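For the schema step, FAQPage markup can be generated from the same question-and-answer pairs you publish on the page. A minimal sketch using the schema.org FAQPage, Question, and Answer types; the helper name and the sample pair are placeholders, and the visible page copy should match the markup.

```python
import json


def faq_jsonld(pairs):
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content, for illustration only.
markup = faq_jsonld([
    ("How do I integrate X with Y?", "Connect the two in Settings, then map fields."),
])
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```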

Watch decay indicators in GA4 paths: longer time to first click from AI-referred sessions, fewer assisted paths that touch pricing or signup, and rising bounce on cited pages. If position-equivalent citations shift to competitors, diff their Surfaces: do they show newer data, clearer steps, or stronger corroboration? Ship the delta within the week.

Short answers that move work forward.

• How do I pick prompts to track? Start with buyer tasks, competitor comparisons, and integration questions. Validate with 10-20 real chats from sales.

• Which tools matter most right now? Use Bing Webmaster Tools' AI Performance where available, GA4 for assisted paths, and your prompt panel for mention diffs.

• How do I stop bad data from polluting GA4? Block headless trackers at the edge, enforce UTM rules, and audit referrers weekly.

• What content updates help AI visibility? Strong entities, precise facts, schema, and external corroboration. Quotes and data tables tend to get cited more.

• How do I prove ROI? Tie citation gains to AI-referred sessions and assisted conversions, then compute influenced MRR like the example above.

You cannot manage what AI hides; build a loop that surfaces it and ships fixes every week.

Deploy AI content visibility tracking, anchor it to clean analytics, and push structured updates tied to prompts that move revenue. The teams that operationalize this will own the invisible half of discovery.